I fell down a flight of stairs at 4 a.m. last Wednesday.

It was totally my fault.

Since then, I have used hospital emergency departments in 2 states, a freestanding imaging center and a large orthopedic clinic, and I’m just getting started. Six days in, I still don’t know the extent of my injuries, my chances of playing golf again or what I will end up spending on this ordeal. Still, it could have been worse. I’m lucky to be alive.

Surprises, in any part of life, are never fully anticipated and are always disruptive. This one is no exception. I am frustrated by my accident and uncomfortable with my sudden dependence on others to help me navigate my recovery.

But this is also a teachable moment. As I navigate this ordeal, I find myself reflecting on the system (how it works or doesn’t) based on what I am experiencing as a patient.

Here are my top three observations thus far:

The patient experience is defined by the support team:

The heroes in every setting I’ve used are the clerks, technicians, nurses and support staff who’ve made the experience tolerable and, at times, reassuring. Patients like me are scared, so emotional support is key: in some settings it is driven by standard operating procedures and checklists; in others, it’s cultural. Genuineness, empathy and personal attention are easy to gauge when you are in pain. By the time physicians are on the scene, reassurance or fear is already in play. The care team includes not just those who provide hands-on care but also the administrative clerks, and the processes they manage can either heighten patient anxiety or ease it. The health and well-being of the entire workforce, not just those who deliver hands-on care, matters. And it’s easy to see the difference between organizations that embrace that notion and those that don’t.

Navigation is no-man’s land:

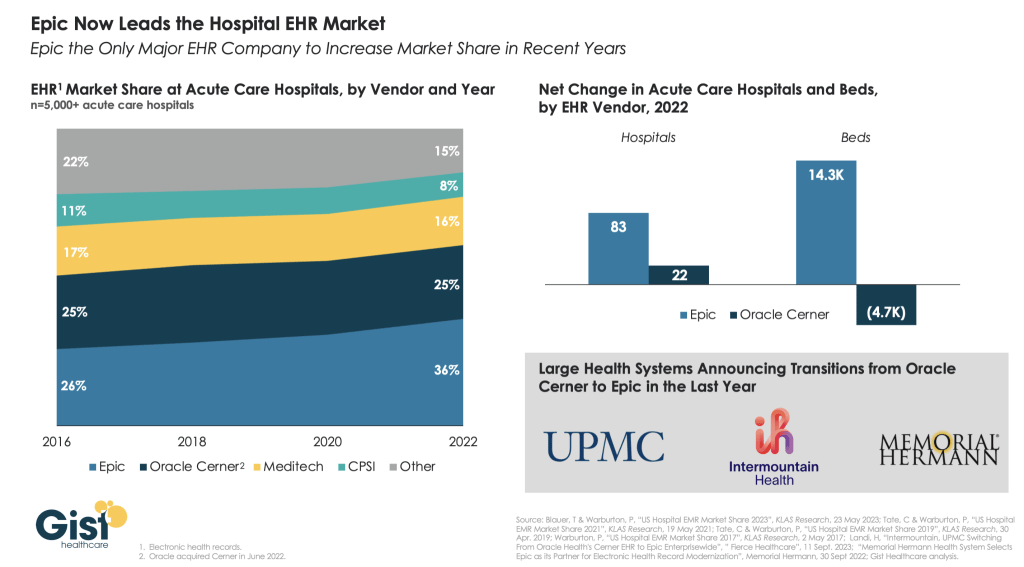

The provider organizations I’ve used thus far have 3 different owners and 3 different EHR systems. Each offers written counsel about ‘patient responsibility’ and a list of do’s and don’ts for each phase of the process. Sharing test results across the 3 provider organizations is nearly impossible, and coordinating care is problematic unless all parties agree and protocols that facilitate sharing are in place. Perhaps because it was a holiday weekend, perhaps because staffing levels were lower than usual, or perhaps because the organizations are fierce competitors, navigating the system has been unusually difficult. Navigating the system in an emergency is essential to optimal outcomes, yet the processes that would help patients do so are not in place.

What’s clear is that hospitals, clinics and imaging facilities on different EHR systems don’t exchange data willingly or proactively. And, at every step, getting approval from insurers is a major step in the process of care.

Price transparency is a non-issue in emergency care:

The services I am receiving include some that are “shoppable” and many that aren’t. I have no idea what I will end up spending, what my out-of-pocket obligations will be or what’s to come. I know that among the mandatory forms I signed before treatment at all 3 sites were consent forms for treatment and acknowledgments of my obligation to pay. But in an emergency, that’s moot: there’s no way to know what my costs or my out-of-pocket responsibility will be. So, the hospital and insurer price transparency rules (2021, 2022) might raise awareness of price differences across settings of care, but their potential to bend the cost curve remains questionable.

We patients have to fend for ourselves. I am a number. Last Wednesday, waiting 85 minutes to be seen was frightening and frustrating, though comparatively fast. Duplicative testing, insurer approvals, work-shift transitions, bedside manners, team morale and sterile care settings seem to be the norm rather than the exception.

So, for me, the practical takeaways thus far are these:

- Don’t have an accident on a holiday weekend.

- Don’t expect front-desk and check-out personnel to engage or answer questions. They’re busy.

- Don’t expect to start treatment or leave without paying something or agreeing to pay.

- Don’t expect waiting areas and exam rooms to be warm or inviting.

- Do have great neighbors and family members who can help. For me, Joe, Jordan, Erin and Rhonda have been there.

The health system is complicated, and relationships among its major players are tense. Not surprisingly, and for many legitimate reasons, my experience thus far is the norm. We can do better.

Paul

P.S. As I have reflected on last week’s event, I have found myself recalling the many times I called on “my doctors” to help me navigate the system. They include Charles Hawes (deceased), Ben Womack, Ben Heavrin, David Maron, David Schoenfeld and Blake Garside. In the same context, I have also thought about the huge respect I have for clinicians I’ve known through Vanderbilt and Ohio State, like Steve Gabbe and Andy Spickard, who personify the best the medical profession has to offer. Thanks, gentlemen. What you do matters beyond diagnoses and treatments. Who you are speaks volumes about the heart and soul of this industry, now struggling to rediscover its purpose.